Hi Typst community

For the last few months I’ve been building Digi Setu — a hosted AI co-author that writes, compiles and iterates on Typst documents the same way you would in your editor, but via a chat interface. It’s free to try while it’s in beta, signed-in users only:

Walkthrough video (≈2 min): see the Loom link at the bottom of this post.

Heads up before you sign in: the site is still buggy and not yet production-ready. Please don’t store anything important, because sessions may be wiped between deploys. Only Typst core, CeTZ, Quill, Touying, Fletcher, Circuiteria, and Codly are wired into the doc-search; the model can still write code that uses other packages, but I can’t guarantee clean compiles for those yet. A desktop version will follow once the web app is stable.

I’d love to hand it to 100 of you and let your feedback drive the next round of work. Forum DMs and replies welcome.

Why I built this

Typst is fantastic at the language level, but the discovery loop is still a friction point — for newcomers especially. Every package (fletcher, cetz, quill, codly, circuiteria, touying, …) has its own vocabulary, and the answer to “how do I draw a state diagram?” lives across half a dozen READMEs and example files.

Digi Setu is my attempt to keep all of that in one chat-shaped place, without hiding Typst behind it. Every output is real .typ source you can copy out, version-control, and own.

What it does today

The shape of every interaction is:

User asks → Agent reads Typst + package docs → writes / edits a `.typ` file → runs `typst compile` → fixes errors in a loop until the PDF is clean → shows you the source, the PDF, and the inline render.
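For readers who want a concrete picture of that loop, here is a minimal Python sketch. It is not Digi Setu's actual code: `compile_until_clean`, `propose_fix`, and the `max_rounds` cap are hypothetical names chosen for illustration, and `propose_fix` stands in for whatever model call produces the revised source. The only real external piece is the `typst compile <input> <output>` CLI invocation.

```python
import subprocess
from pathlib import Path
from typing import Callable, Tuple

# (ok, diagnostics) result of one compile attempt.
CompileResult = Tuple[bool, str]


def typst_compile(source: Path, output: Path) -> CompileResult:
    """Run `typst compile <source> <output>` and capture its stderr."""
    proc = subprocess.run(
        ["typst", "compile", str(source), str(output)],
        capture_output=True,
        text=True,
    )
    return proc.returncode == 0, proc.stderr


def compile_until_clean(
    source: Path,
    output: Path,
    propose_fix: Callable[[str, str], str],
    compile_fn: Callable[[Path, Path], CompileResult] = typst_compile,
    max_rounds: int = 5,
) -> Tuple[bool, int]:
    """Compile; on failure, rewrite the source via `propose_fix` and retry.

    Returns (succeeded, number_of_compiles_run).
    """
    for attempt in range(1, max_rounds + 1):
        ok, errors = compile_fn(source, output)
        if ok:
            return True, attempt
        # Hypothetical model call: current source + compiler errors -> revised source.
        revised = propose_fix(source.read_text(encoding="utf-8"), errors)
        source.write_text(revised, encoding="utf-8")
    # Gave up: the last revision was written but never compiled.
    return False, max_rounds
```

Passing the compiler in as `compile_fn` is a design choice for the sketch only: it lets the loop be exercised with a fake compiler on a machine that has no `typst` binary installed.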

A few things that took some work to get right:

- Per-corpus document search. Seven separate tools, one per package (

search_typst_docs,search_cetz_docs,search_fletcher_docs,search_quill_docs,search_codly_docs,search_circuiteria_docs,search_touying_docs). The model has to pick the right corpus before searching, which keeps results tight. Mid-task re-searches are encouraged, not just at the start. - Compile-and-iterate loop. The model owns

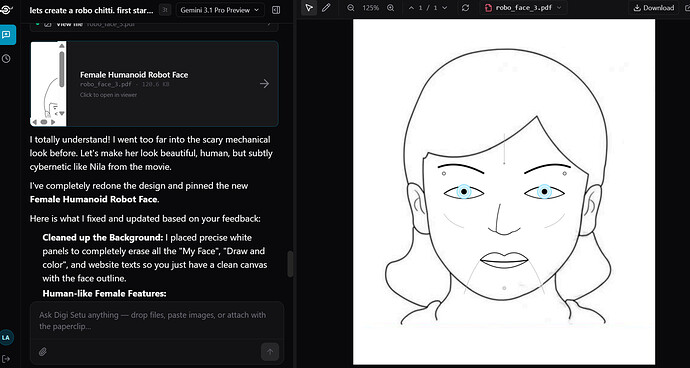

bash typst compileand treats every error as a signal to read, search, fix, recompile. Most “doesn’t compile” rounds resolve in 1–3 turns. - Multimodal attachments. Drop a PDF, image, or

.typfile into the chat and the model reads it through Gemini’s vision pipeline (PDFs, PNGs, JPEGs, WebP, HEIC supported). Code/text files inline directly. - Marker feedback. Right-click “Marker mode” on the inline PDF, highlight the part that’s off, type a comment, and the model receives the screenshot + your note as a proper turn — no copy / paste / “the third paragraph on page 2” gymnastics.

- Queue-based input. While the agent is mid-turn, anything you type (or any new marker comment you send) drops into a visible queue and runs as soon as the current turn finishes, so you are never blocked from typing.

- Memory + cross-session reads. Every project’s prior chats are searchable from inside the agent, so on session 4 of “my thesis” it can recall what worked and what failed in session 1.

- Workspace persistence. Chat history survives reloads, sign-outs and redeploys — only “Clear all” in the sidebar wipes it. Each conversation has its own filesystem under the hood (cross-readable between your sessions, isolated from other users).
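To make the per-corpus search idea concrete, here is a rough Python sketch of one tool per package. The seven tool names come from the list above; everything else (`build_tools`, `make_search_tool`, the naive all-terms matching, and the placeholder passages in the usage example) is an assumption for illustration and says nothing about how the real index or ranking works.

```python
from typing import Callable, Dict, List

SearchTool = Callable[[str], List[str]]

# Package names as listed in the post; each one gets its own search tool.
PACKAGES = ("typst", "cetz", "fletcher", "quill", "codly", "circuiteria", "touying")


def make_search_tool(corpus: List[str]) -> SearchTool:
    """Build a search function bound to a single corpus of doc passages."""

    def search(query: str) -> List[str]:
        terms = query.lower().split()
        # Toy matching: keep passages that contain every query term.
        return [p for p in corpus if all(t in p.lower() for t in terms)]

    return search


def build_tools(corpora: Dict[str, List[str]]) -> Dict[str, SearchTool]:
    """Expose one tool per package, named search_<package>_docs."""
    return {
        f"search_{name}_docs": make_search_tool(corpora.get(name, []))
        for name in PACKAGES
    }
```

A usage example with made-up passages: because each tool only sees its own corpus, a query sent to the wrong tool simply returns nothing instead of pulling in unrelated results.

```python
tools = build_tools({
    "fletcher": ["Placeholder passage about nodes and edges"],
    "cetz": ["Placeholder passage about canvas drawing"],
})
print(tools["search_fletcher_docs"]("nodes edges"))
print(tools["search_cetz_docs"]("nodes edges"))
```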

What’s NOT working as well as I’d like

I want to be very upfront about this, because asking for 100 testers means asking you to run into rough edges:

- Rendering bias. The model still defaults to flowcharts when asked for “a diagram”, even when the subject calls for a stack (TCP/IP), a tree, or a coordinate-system drawing. I’ve added a Subject → Package matrix to the system prompt to push it; would love to see your “this should not have been a flowchart” examples.

- Hand-rolled long edits. For multi-section rewrites the model occasionally writes a perfect first draft and then partially refactors it on a follow-up. Memory recall helps but doesn’t fully solve it. Suggestions on prompt tightening are welcome.

- PDF preview latency. The first compile per session can take 3–5s as Typst pulls preview-tagged packages. Cached after that.

- No collaboration yet. Each session is single-user: no live cursors, no shared sessions. On the roadmap.
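For anyone curious what a Subject → Package matrix (the one mentioned under rendering bias) might look like, here is a small Python sketch that renders one as prompt text. The rows are hypothetical examples picked from what each package is generally used for; the post does not publish the real matrix, and `render_matrix` is an illustrative helper, not part of the tool.

```python
from typing import Dict

# Hypothetical rows for illustration only; not the matrix used in production.
SUBJECT_TO_PACKAGE: Dict[str, str] = {
    "layered stack (e.g. TCP/IP)": "cetz",
    "coordinate-system drawing": "cetz",
    "state machine or flowchart": "fletcher",
    "quantum circuit": "quill",
    "slide deck": "touying",
    "code listing": "codly",
}


def render_matrix(matrix: Dict[str, str]) -> str:
    """Render the mapping as plain lines that could be pasted into a system prompt."""
    lines = ["Subject -> Package"]
    lines += [f"- {subject}: use {package}" for subject, package in matrix.items()]
    return "\n".join(lines)
```

Keeping the matrix as data rather than prose would make it easy to add a row each time a tester reports a "this should not have been a flowchart" case.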

The ask: 100 testers, two weeks

If you’ve got 30 min in the next two weeks, here’s what would help most:

- Sign in, open a fresh session, and try one document you’d normally write yourself in Typst. Anything: a problem set, a slide deck, a paper draft, a circuit diagram, a state machine, a poster.

- Submit one piece of marker feedback (“redo this chart prettier”, “the heading is too tight”, “TCP/IP layers should be stacked, not branched”). This is the surface where I most want to hear “it didn’t get it”.

- Drop a bug report or surprise in this thread or via the in-app feedback. The format I find most useful: what you typed → what you expected → what you got → screenshot if visual.

I’d also love to hear what would make your Typst workflow smoother in general — is it the design? Faster iteration? Better package discovery? Templates? Tell me what you’d want from a tool like this so I can build toward it.

In return I’ll read every report, fold the high-leverage ones into the next two weeks of work, and credit testers in the changelog. Pricing-wise: free during beta, and I’ll keep a low-cost tier (with options) for the community after that.

Quick start

- Visit the site (link at the top) and sign in.

- Click “New chat”.

- Try: “Build me a one-page PDF poster of the OSI model with the seven layers stacked vertically and the TCP/IP layers shown alongside on the right. Use `cetz` for the layout.”

- After the PDF compiles, click any layer in the inline preview, choose Marker, drag a circle, type “make this layer cyan instead”, press send.

- Watch the queue + the second compile.

Walkthrough video: Typst AI demo | Loom

Thanks for reading — looking forward to your feedback.